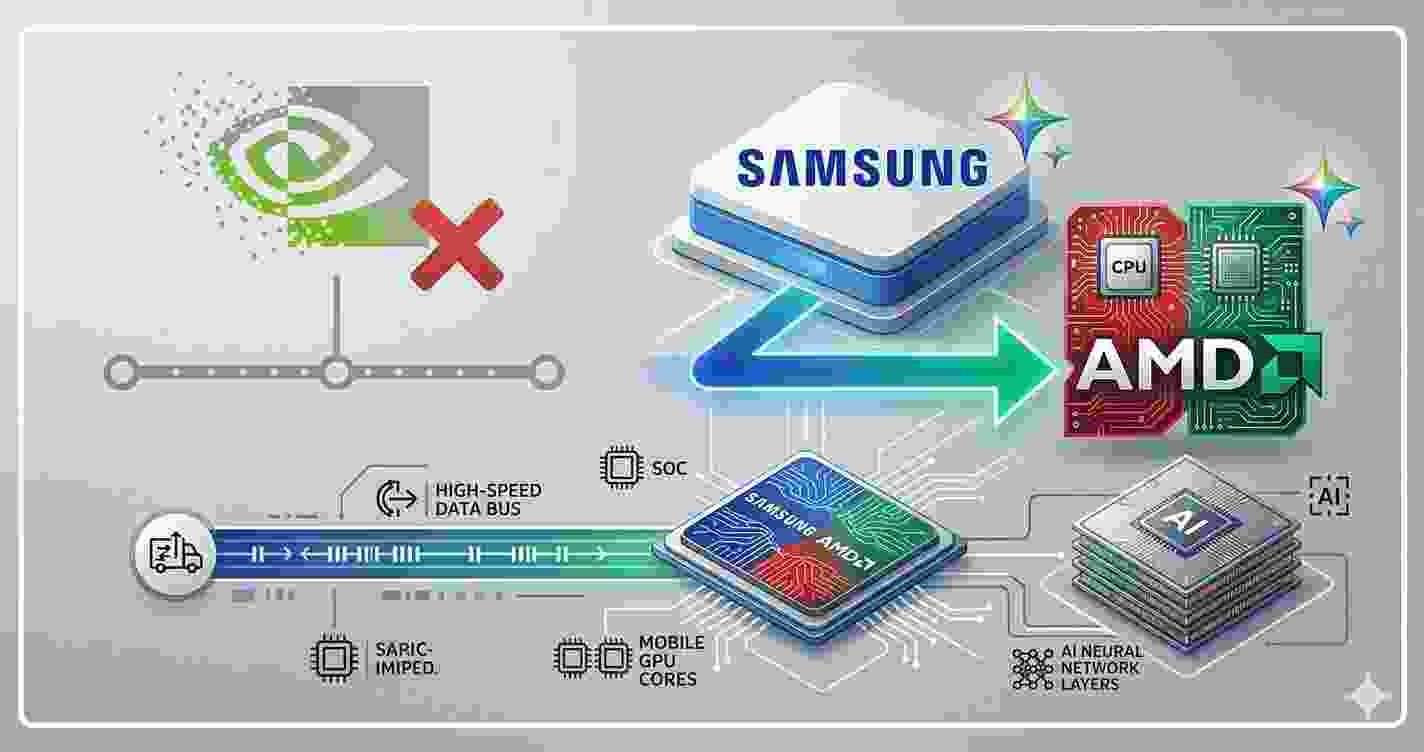

Samsung Just Chose AMD Over Nvidia

Samsung just made a choice that sends a very clear message to Nvidia.

Bloomberg reported today that Samsung Electronics has agreed to supply next-generation AI memory chips to AMD — a deal that positions Samsung and AMD as direct partners in the race to challenge Nvidia’s dominance over the AI chip market.

The timing is not accidental. And the implications go well beyond two companies signing a supply contract.

What the Deal Actually Is

The specifics center on HBM (High Bandwidth Memory), the specialized stacked memory that sits in the same package as an AI processor and lets it handle the enormous amounts of data that large AI models require.

HBM is not a commodity. It’s one of the most technically demanding chips to manufacture, and only three companies in the world can make it at scale: Samsung, SK Hynix, and Micron. Nvidia’s current dominance in AI chips is partly built on its access to SK Hynix’s HBM supply — the two companies have had a tight relationship that helped Nvidia’s H100 and H200 chips become the standard infrastructure for AI training worldwide.

Samsung partnering with AMD is a direct counter to that arrangement. AMD gets access to Samsung’s manufacturing scale and expertise. Samsung gets a major customer for its HBM business that isn’t dependent on Nvidia’s goodwill. Both companies get a credible path to competing with the world’s most valuable semiconductor company.

Why AMD Is Finally a Real Contender

AMD has been trying to break into the AI training market for years. Its MI300X chip launched to genuine praise from technical reviewers — but struggled to gain traction simply because the AI industry had already standardized on Nvidia’s CUDA software ecosystem.

Switching from Nvidia to AMD isn’t just a hardware swap. It means rewriting software, retraining teams, and rebuilding workflows that have been optimized for Nvidia’s tools over years. Most companies looked at that cost and decided it wasn’t worth it — even when AMD’s hardware was genuinely competitive on paper.

That calculation is starting to change. As AI spending has ballooned into what Bloomberg describes as “a vast liability hanging over financial markets,” companies are looking much harder at whether Nvidia’s premium pricing is justified — or whether AMD offers comparable performance at a meaningfully lower cost.

Samsung’s HBM supply agreement gives AMD the memory infrastructure to close the remaining hardware gap. The software ecosystem problem is still real. But it’s getting smaller every quarter as AMD invests heavily in its ROCm software platform.

The Broader Chip War Context

This deal lands the same week that Jensen Huang unveiled Nvidia’s agentic AI strategy at GTC 2026 — a keynote designed to cement Nvidia’s position as the infrastructure layer for the next wave of AI deployment.

The message from AMD and Samsung today is essentially: not if we have anything to say about it.

As we covered in our breakdown of Nvidia’s GTC 2026 keynote, Nvidia’s strategy increasingly depends on locking customers into its full stack, from chips to software to networking to data center design. The more complete that stack becomes, the harder it is to replace any piece of it.

Samsung and AMD are betting that enough customers will want an alternative badly enough to do the work of switching. Big hyperscalers — Amazon, Google, Microsoft — have already been building their own custom AI chips partly for exactly this reason. They don’t want to be permanently dependent on a single supplier for the infrastructure their entire business runs on.

What This Means for the Average Person

AI chip wars sound distant until you realize they directly determine how fast AI tools improve, how much they cost, and which companies can afford to build them.

Nvidia’s pricing power — which is substantial right now — exists partly because there’s no credible alternative for customers who need to train large AI models at scale. Every dollar AMD and Samsung invest in changing that dynamic is a dollar invested in eventually making AI infrastructure cheaper and more competitive.

That benefits everyone who uses AI tools — which at this point is most people.

Also read: Nvidia Just Pulled Its Money Out of OpenAI and Anthropic
